Now that read/write XMLA endpoints for Power BI Premium datasets are generally available, along with support for service principals, BI teams have the ability to implement customized automation processes aligned to their specific scenarios and requirements. One of the most common use cases for XMLA automation will be dataset processing (refreshes), which can be scheduled and managed outside of the Power BI service and optionally linked to or triggered from upstream jobs/pipelines/events.

In today’s blog we’ll look at the essentials needed to get started with automating the refresh of Power BI premium datasets via the XMLA endpoint (with service principal) and using Azure Automation as a resource to secure and manage these automation processes.

What Problems Are We Solving?

- Granular, custom refresh requirements

- A full dataset refresh, even if incremental refresh is configured for the fact tables, is not practical or efficient if only certain tables or partitions need to be processed.

- With the SqlServer PowerShell cmdlets such as Invoke-ProcessTable and Invoke-ProcessPartition, teams can develop scripts that only refresh what needs to be refreshed when it needs to be refreshed (based on a schedule, trigger, etc).

- Service Principal Authentication

- Service principal authentication is only supported for certain reader-only PBI admin APIs

- Therefore, if you want to use the Invoke-PowerBIRestMethod cmdlet or some other method to call the RefreshDatasetInGroup API, you’ll need to authenticate to the PBI Service with a user principal (not a service principal).

- Depending on your organization and its security policies, this can be a serious blocker as you don’t want important automation processes tied to one individual’s identity and it may not be easy to get a user service account created with the necessary permissions.

- Cloud architecture and integration

- Many organizations already have investments and experience with products like Azure SQL Database and Azure Analysis Services so XMLA endpoint makes it easy to leverage existing and familiar automation and management tools like Azure Automation and the SqlServer PowerShell module to integrate XMLA-based automation.

- Given the previously mentioned limitations with the Power BI API, PBI dataset automation processes like refresh requests may have been isolated from data source processes and/or deployed to Azure VMs or on-premises resources instead of cloud services.

What You Need to Get Started

As is often the case, the actual code to be developed and deployed is very simple. It’s the coordination with people (often in separate departments like security, identity, etc) and their processes and policies to obtain the necessary permissions and resources that can be the challenge. Hopefully this blog post will help you understand what you need and you can find people that will help you if you don’t have visibility or access to some of the requirements.

Additionally, there are a few little ‘gotchas’ that can be very frustrating that I’ve tried to call out. The following MS Docs article covers several of the following:

- Create a service service principal

- You may be able to create one in the Azure Active Directory blade

- Even if you can, you should probably check with your team or the identity/Azure AD team if there’s already a service principal that can be used.

- Securely document the application ID and client secret

- In some organizations, the preference or requirement may be to create and secure a certificate thumbprint.

- You may be able to create one in the Azure Active Directory blade

- Either A) assign the service principal to an existing Azure AD Security group or B) create a new Azure AD security group and add the service principal as a member

- In the Power BI Admin portal, enable the ‘Allow service principals to use Power BI APIs’ setting for the security group containing the service principal you’ll be using.

- You’ll need someone with the Power BI Administrator role to implement this.

- Add the security group containing the service principal to the Power BI Premium-hosted app workspace containing the dataset(s) you intend to process (refresh).

- The contributor or higher role should be sufficient for dataset operations.

- If you’re already a workspace admin or member of the workspace, you should be able to do this. If not, you may have the Power BI admin or team to add the security group to the

- Identify the server name associated with the Power BI premium workspace

- This should look like the following:

- powerbi://api.powerbi.com/v1.0/mycompanydomain/workspacename

- This should look like the following:

- Identify the name of the Power BI dataset hosted on this premium workspace to be refreshed.

- Also identify the names of tables and partitions that you’ll need to reference in your scripts if your scripts needs this granularity.

- If you plan to use Azure Automation to execute your XMLA scripts and to optionally secure and manage the associated credentials and common variables, you’ll need to provision an Automation Account or obtain access to an existing Azure Automation resource.

- Install or update the SqlServer PowerShell module to the latest version

- Both on your development machine and in your Azure Automation resource (if using it), you’ll want to update to version 21.1.18235 or higher of this module.

- Your script could be flawless and you have everything else configured properly but your script will fail if you don’t update this module.

- If you’re running PowerShell 7.1+ on your development machine, which I hope you are, as of this writing you need to import the SqlServer module from WindowsPowerShell to have access to the cmdlets (e.g. Invoke-ProcessTable) you need for processing Analysis Services databases (or PBI datasets)

- Import-Module SqlServer -UseWindowsPowerShell

- Both on your development machine and in your Azure Automation resource (if using it), you’ll want to update to version 21.1.18235 or higher of this module.

Test Script Components

At this point, you need to reference the resources and artifacts in the prior section in PowerShell script(s). In the following simple snippet of a local test, a PowerShell credential is created with the app id and secret of a service principal and then a process table command and a partition process command is submitted via service principal to the XMLA endpoint:

$SecPasswd = ConvertTo-SecureString $Secret -AsPlainText -Force

$PSCred = New-Object System.Management.Automation.PSCredential($AppID,$SecPasswd)

$Workspace = 'powerbi://api.powerbi.com/v1.0/mycompany.com/EDWDatasets'

$Database = 'GeneralLedger'

#Import SQLServer is required for PowerShell 7.1 as of today(2/21/2021)

Import-Module SqlServer -UseWindowsPowerShell

#Process (refresh) the facility dimension table

Invoke-ProcessTable -TableName 'Facility' -RefreshType Full -Server $Workspace

-DatabaseName $Database -Credential $PSCred -ServicePrincipal

#Process the 2014 partition of the GL Transaction fact table

Invoke-ProcessPartition -TableName 'GL Transaction' -PartitionName '2014' -RefreshType Full -Server $Workspace -Database $Database `

-Credential $PSCred -ServicePrincipal- This is just a local test example to get started

- In a production environment you can move the variable values like workspace and database outside the script

- The Azure Automation Deployment (the following section) mentions credentials and variable resources as one option to abstract away and secure these values

- In a production environment you can move the variable values like workspace and database outside the script

- Notice that these examples do not specify a tenantID or an applicationID

- These are optional parameters on the Invoke-Process cmdlets.

- To be the most explicit and robust, you may add these in your solution but it’s not required

- Notice that the Invoke-ProcessTable cmdlet has a DatabaseName parameter but the Invoke-ProcessPartition cmdlet has a Database parameter.

- The same value (name of the pbi dataset) can be used for both.

- RefreshType is a required parameter and its options are outside the scope of this post.

An even simpler command is to process (refresh) the entire dataset:

Invoke-ProcessASDatabase -Server $Workspace -DatabaseName $Database -RefreshType Full `

-Credential $PSCred -ServicePrincipalYou should get results in your PowerShell console like the following:

Azure Automation Deployment

You absolutely could schedule your XMLA PBI scripts with traditional scheduling tools like SQL Server Agent and/or integrate them in SSIS packages. However, given the various limitations with these approaches and the overall desire for a cloud service-oriented architecture, I assume Azure Automation will be a preferred choice for many organizations.

- In your Azure Automation resource, create a credential based on the application ID and secret of your service principal.

- Credentials can be found in the Shared Resources section of the Azure Automation blade.

- Confirm that you have an updated version of the SqlServer PowerShell module imported

- If you don’t have SqlServer or an updated version, you can import it via the Modules Gallery under SharedResources.

- Create encrypted variables to store the values for the name of the PBI premium workspace and the dataset

- Variables are also under the Shared Resources section

- If you plan to specify values for the ApplicationID and Tenant parameters of the processing cmdlets, you could encrypt these variable values as well for reuse in your scripts

- Create a new Azure Automation Runbook which references your stored/secured service principal credential and variables like the following sample which executes a process full for the entire dataset:

$PSCred = Get-AutomationPSCredential -Name 'PBIServicePrincipal'

$Workspace = Get-AutomationVariable -Name 'PBIWorkspace'

$Dataset = Get-AutomationVariable -Name 'PBIDataset'

Invoke-ProcessASDatabase -Server $Workspace -DatabaseName $Dataset -RefreshType Full `

-Credential $PSCred -ServicePrincipalIn this case, the premium workspace (an Analysis Services server), the dataset (an Analysis Services database), and the service principal credential are retrieved into the context of the Automation Runbook.

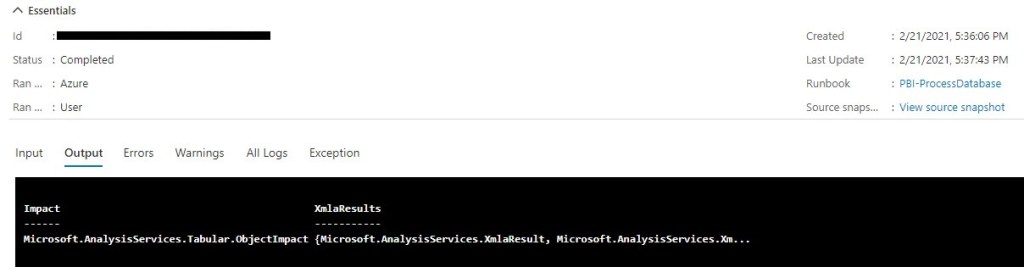

You should get a successful output message mentioning Analysis Services XMLA:

A couple more notes on this:

- Incremental refresh policies are respected by the XMLA commands

- For example, running a process database full will only refresh the partitions of a table that meet the requirements of the refresh policy configured for this table.

- Other tables without incremental refresh policies will be fully re-processed.

- If you have a scheduled dataset refresh configured in the Power BI service, the XMLA-based refresh operations will not (as of this writing at least) be reflected in the refresh history metadata exposed in the Power BI service.

- You can connect to the PBI dataset via XMLA endpoint in SSMS and view the properties of whichever objects (table, partition) were processed to further confirm that indeed these objects have been refreshed/processed.

Wrapping Up

The general availability of XMLA read/write, along with other recent enhancements such as large models, brings Power BI datasets up to the task of taking on enterprise-grade projects with high scale and complexity. For those of you that have been near Analysis Services for years, you know the value (and sometimes complexity) of unattended XMLA automation operations like rolling window refresh scripts, synchronization, and application lifecycle management (ALM) processes. Now with XMLA read/write and service principals, these same administrative and management processes can be applied to Power BI datasets. I’ll look to highlight other common scenarios of XMLA read/write (beyond data refresh) in future blog posts. Click ‘Follow’ if interested in subscribing to email notification for these future posts.

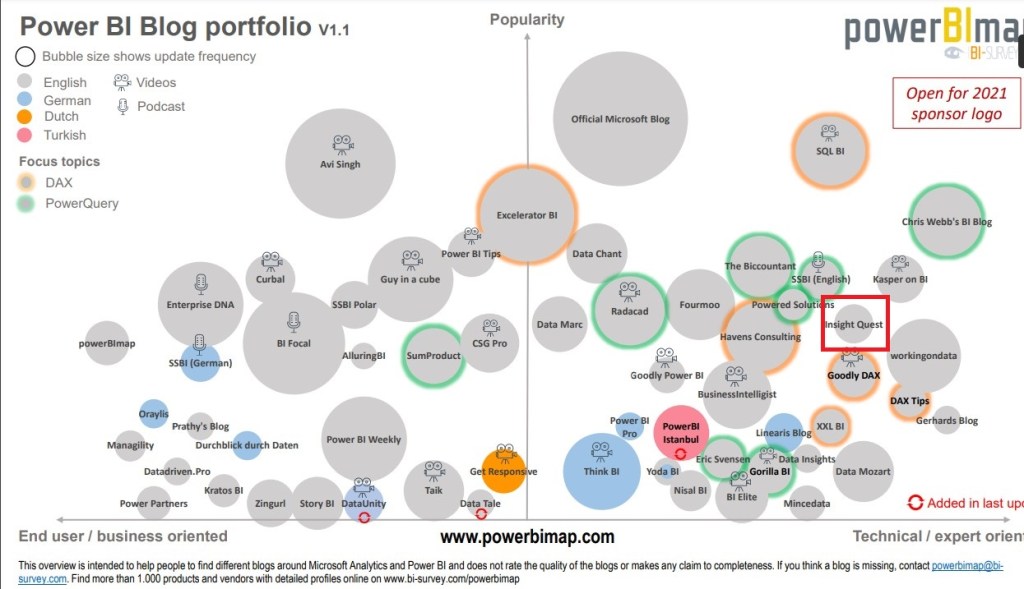

Bonus: Insight Quest’s Place in the Ecosystem

According to PowerBIMap, Insight Quest is one of the more popular Power BI blogs, particularly among the more technical Power BI blogs. Although it’s rewarding to see these results, as a competitive person I’d like to see Insight Quest make its way further to the top and right of the following visual. I suspect a larger bubble reflecting a greater volume of updates/posts will help accomplish this as we progress through 2021.

2 comments