In this week’s episode of Insight Quest, Brett, the team BI lead, has been assigned three work items related to the programmatic management of Azure Analysis Services and Power BI Embedded capacity resources:

The overall goal of this work is to better align provisioned BI resources in Azure with the workload and deliver acceptable or required performance and availability. The organization wants to take advantage of the elasticity of cloud resources.

For example, the organization may want or have the ability to pause (stop) the resources at certain scheduled intervals for cost reasons but scale up the resources (more CPU cores, more memory) at certain time intervals of the day or week to meet heightened usage. The scope of these tasks is just to build a few of the core scripting components to manage the resources. A later sprint may involve integrating or synchronizing the components together such as with triggers, parent-child runbook structures, and more.

Requirement Details

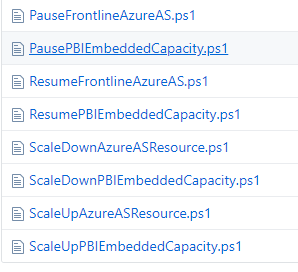

- Create two PowerShell scripts which will resume (re-start) an Azure Analysis Services resource and a Power BI Embedded resource, respectively, from a paused (stopped) state.

- Create two PowerShell scripts which will pause (stop) an Azure Analysis Services resource and a Power BI Embedded resource, respectively, from a running state.

- Create two ‘scale up’ PowerShell scripts which increase the resources available to the Azure Analysis Services resource and the Power BI Embedded capacity resource, respectively.

- The Azure AS script will increase the instance size by one instance level such as from an S0 to an S1 up to a maximum of an S4 (400 QPUs, 100 GBs of RAM) relative to the current instance running.

- The PBI Embedded capacity script, likewise, will increase the node type by one level such as from an A1 to an A2 node.

- Create two ‘scale down’ Powershell scripts which reduce the resources available to the Azure Analysis Services resource and the Power BI Embedded capacity resource, respectively.

- The Azure AS script will reduce the instance size by one level such as from an S1 with 100 QPUs and 25 GBs of RAM to an S0 instance with only 40 QPUs and 10 GBs of RAM.

- The PBI Embedded capacity script, likewise, will decrease the node type by one level such as from an A2 node with 2 virtual cores and 5 GB of RAM to an A1 node with 1 virtual core and 3 GB of RAM.

- Leverage the eight (8) PowerShell scripts created in steps 1 through 4 to create Azure Automation Runbooks.

- Utilize the stored variables and credential(s) in Azure Automation to configure and test each Runbook.

It’s absolutely possible, and perhaps a subject of a future blog post, to use other familiar on-premises automation and orchestration tools such as SQL Server Agent and SQL Server Integration Services (SSIS). For example, an SSIS package or a SQL Agent Job invoking the PowerShell scripts (steps 1-4) could be linked to a preceding package or job related to the data warehouse. In this scenario at least, we’ll assume the organization wants to avoid dependencies with on-premises infrastructure – they want a fully PaaS-based solution via Azure Automation.

Runbook Script Reference

The eight PowerShell scripts used as Runbooks in Azure Automation have been uploaded to GitHub: Azure Automation Runbook Scripts

As these files reference variables and credentials specific to Azure Automation, you would need to re-factor for execution outside of Azure Automation. As mentioned, this might be a topic in the future and I can provide examples and more context at that time.

Note: Be sure to import the necessary PowerShell modules to your Azure Automation account before creating the Runbooks for your environment. You need to import the AzureRM.PowerBIEmbedded and AzureRM.AnalysisServices modules and likely others for other related Runbooks such as processing/refreshing Analysis Services databases.

Script Logic

Per the included comments and numbers, the scripts follow the essential structure:

- Retrieve values from Azure Automation stored as variables or credentials

- These include the name of the resource group, the resource itself, the tenant ID, and the stored credential.

- Authenticate with an approved credential with rights to manage the resource

- Retrieve the current state of the resource such as whether it’s already running or paused and the current size of the resource (e.g. an S1 Azure AS instance).

- Based on the current state (#3), perform some action such as scale up or down to a particular level.

Start and Stop Scripts

Let’s ‘start’ with the Runbook script for starting up Azure Analysis Services

#1. Define variables to retrieve parameter values stored as Azure Automation variables or credentials $AzureASResource = Get-AutomationVariable -Name 'AzureASResource' $Tenant = Get-AutomationVariable -Name 'FrontlineTenant' $ResourceGroup = Get-AutomationVariable -Name 'BIResourceGroupName' $AzureCred = Get-AutomationPSCredential -Name "AzureASRefreshCred" #2. Authenticate with stored credential in Azure Automation Connect-AzureRmAccount -Credential $AzureCred -Tenant $Tenant -ServicePrincipal #3. Capture Azure AS Server state $ServerConfig = Get-AzureRmAnalysisServicesServer -ResourceGroupName $ResourceGroup -Name $AzureASResource $ServerState = $ServerConfig.State #4. Resume (start) the server if paused If($ServerState -eq "Paused") {Resume-AzureRmAnalysisServicesServer -Name $AzureASResource -ResourceGroupName $ResourceGroup} Else {Write-Host "Server is already running"}

To limit the length of this post, and since steps #1 and 2 are almost identical to the preceding example, I’ll just show steps #3 and 4 of stopping the Power BI embedded capacity:

#3. Capture Power BI Embedded state $CapacityConfig = Get-AzureRmPowerBIEmbeddedCapacity -ResourceGroupName $ResourceGroup -Name $PBIEmbeddedResource $CapacityState = $CapacityConfig.State #4. Pause capacity if running If($CapacityState -eq "Succeeded") {Suspend-AzureRmPowerBIEmbeddedCapacity -ResourceGroupName $ResourceGroup -Name $PBIEmbeddedResource} Else {Write-Host "Capacity is already paused"}

Notice that the two cmdlets Get-AzureRmAnalysisServicesServer and Get-AzureRmPowerBIEmbeddedCapacity are used to capture the current state of the given resources. Since we’re only starting or pausing the resources, we just check for “Paused” or “Succeeded” values from the State property.

Variables, which are stored in Azure Automation, are passed to the Resume and Suspend cmdlets for the given resource types to complete the operation.

In the event that the resource is already running or paused when a runbook to resume or pause the resource is executed (as a job), respectively, the job will not fail given the If-Else conditional logic.

Scale Up and Down Scripts

The scale up and down scripts go just a bit further than the start/pause scripts to also capture the current resource level (instance size or node type). With this info retrieved, the script then identifies the resource level (SKU) one level higher and passes this value to a cmdlet to scale up/down the resource.

#3. Capture server state and server instance size ("S0","S1","S2","S4") $ServerConfig = Get-AzureRmAnalysisServicesServer -ResourceGroupName $ResourceGroup -Name $AzureASResource $ServerSKU = $ServerConfig.Sku.Name $ServerState = $ServerConfig.State #4. Determine scale up instance size (one level higher to max of "S4") $ScaleUpSKU = If ($ServerSKU -eq "S0") {"S1"} ElseIf ($ServerSKU -eq "S1") {"S2"} Else {"S4"} #5. Scale up Azure AS resource if running If($ServerState -eq "Succeeded") {Set-AzureRmAnalysisServicesServer -Name $AzureASResource -ResourceGroupName $ResourceGroup -Sku $ScaleUpSKU} Else {Write-Host "Server instance isn't running"}

Per the above script, the current resource level (SKU) is stored as a variable and used as the basis for computing the scale up SKU. This variable is passed to the Set-AzureRmAnalysisServicesServer cmdlet along with the variables identifying the given Azure AS resource.

A couple notes:

- In many scenarios, a specific scale up and down resource level will be known in advance and thus there’s not a need to embed logic to determine these levels dynamically in the script.

- Keeping this logic in the script, however, does maintain a level of flexibility such as running the script multiple times based on various conditions/inputs. In other words, you could keep scaling up or down until certain thresholds are reached.

- Yes, there are larger Azure Analysis Services SKUs including an S8 or S9.

Scaling down the PBI Embedded capacity naturally follows very similar logic, just with cmdlets and variables specific to PBI Embedded:

#3. Capture PBI Embedded capacity state ("Paused" or Succeeded") and node size ("A1"...."A6") $CapacityConfig = Get-AzureRmPowerBIEmbeddedCapacity -ResourceGroupName $ResourceGroup -Name $PBIEmbeddedResource $CapacityState = $CapacityConfig.State $CapacitySKU = $CapacityConfig.Sku #4. Determine scale down node size $ScaleDownSKU = If ($CapacitySKU -eq "A6") {"A5"} ElseIf ($CapacitySKU -eq "A5") {"A4"} ElseIf ($CapacitySKU -eq "A4") {"A3"} ElseIf ($CapacitySKU -eq "A3") {"A2"} Else {"A1"} #5. Scale down one node size if the capacity is running If ($CapacityState -eq "Succeeded") {Update-AzureRmPowerBIEmbeddedCapacity -ResourceGroupName $ResourceGroup -Name $PBIEmbeddedResource -Sku $ScaleDownSKU} Else {Write-Host "Capacity node isn't running"}

Per the above code, the If-Else logic determines the Power BI Embedded capacity node type one level below the current state. This variable, $ScaleDownSKU, is passed to the Update-AzureRmPowerBIEmbeddedCapacity cmdlet. Again, the variables such as the resource group, resource name, and tenant are all stored in Azure Automation such that they can be centrally managed and used across multiple Runbooks.

Wrapping Up

With some fairly lightweight PowerShell scripts you can take control of your Azure BI resources and align the availability and scale to your needs. As always you’re welcome to share thoughts or questions. Each of the eight (8) Runbook scripts on GitHub have been through testing/validation but of course your scenario or approach could vary.

Next week’s blog will likely feature either Power Query and Dataflows or elements of an MSBI monitoring solution. You may consider following this blog if you find this content useful.

Smashing Pumpkins

I’m not sure if I’ll continue this with future posts but A) some readers/attendees seem interested in knowing something a bit more personal than the About Me slides and B) maybe a song would be a good change of pace to help close out technical blog posts.

Anyway, here’s one of my favorite songs:

2 comments